Micron: A No-Brainer AI Bet

AI-fueled growth, US manufacturing, dividends, and share repurchases make Micron a low-risk, high-return AI opportunity

When people discuss AI, they usually think first of large language models like ChatGPT or Claude, or of chip designers like NVIDIA and AMD.

Some also recognize the opportunity in foundries like TSMC. What many still overlook is the critical role of memory.

The rise of AI has pushed computational demand to unprecedented levels, and growth remains exponential. Today, foundry capacity and raw materials are no longer the only major bottlenecks in scaling AI accelerator output. One of the largest is HBM.

HBM, or High Bandwidth Memory, is the ultra-fast memory integrated into every leading AI accelerator. Without it, even the most advanced GPUs face performance constraints. Demand for this memory has surged, lifting growth across memory producers and leaving supply tight. That creates a significant opportunity for investors who want exposure to the AI buildout.

A Memory Powerhouse

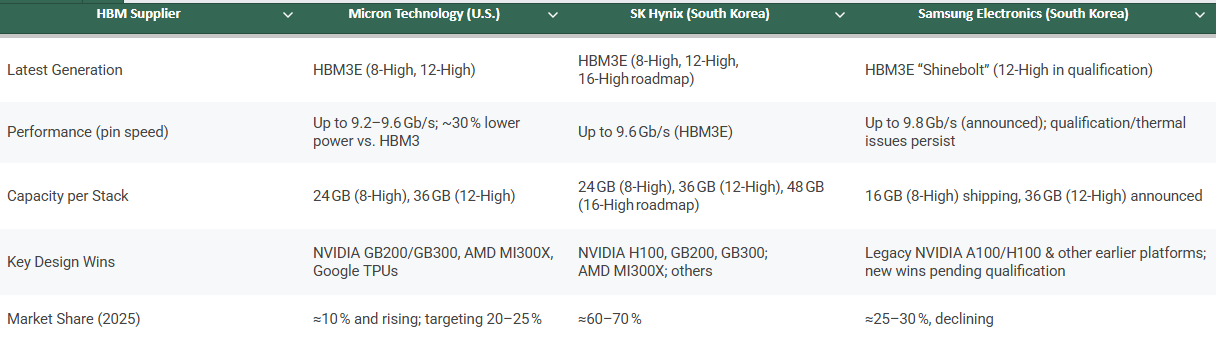

The HBM market is an oligopoly with three major players: Samsung, SK hynix, and Micron. Samsung, long the clear memory leader, has lost ground in HBM as execution and R&D momentum shifted toward its peers.

SK hynix has moved fastest in HBM development, but Micron is a close second and in some areas already ahead.

Its HBM3E is not only more power efficient, with around 20% lower power consumption than competing products, but its 12-high design also delivers roughly 50% more capacity per stack than 8-high configurations. That is a major advantage in a world where capacity and thermal efficiency are becoming as critical as raw compute.

NVIDIA selected Micron as a key supplier for GB200 Grace Blackwell systems and for upcoming GB300 platforms, positioning Micron as a foundational vendor for next-generation AI infrastructure. That level of design-in reflects strong confidence in product quality and execution.

Micron did not stop with NVIDIA. It is also a key supplier to AMD, as well as to Google and other major hyperscalers. The company has stated that its HBM output for calendar 2025 is sold out.

Here is a quick comparison of the three leading memory producers:

That level of demand has triggered a major investment cycle. Micron is responding by increasing capital expenditure to expand capacity and meet what management describes as a multi-year demand inflection.

This is not speculative growth. It is demand that already exists and is outpacing supply, which can support Micron’s expansion for years.

Beyond HBM

Micron’s growth is not only about HBM. AI systems depend on a full stack of memory technologies, and Micron is positioned across all of them. Data centers require DRAM for CPUs, GDDR memory for accelerators, and NAND flash for training data storage and model-serving workloads.

Here is a breakdown of the core memory categories powering AI and Micron’s position in each.

Demand across the memory stack is accelerating. Server adoption of DDR5 continues to rise as AI workloads increase memory intensity. NAND remains essential for data-heavy AI use cases, and GDDR is seeing broader use in high-performance graphics systems repurposed for AI tasks.

There is another important dynamic: HBM tightens the broader DRAM supply-demand balance. HBM can consume up to three times as much silicon as DDR5 to produce the same number of bits, and future generations such as HBM4 could push that ratio to around 4x. In practice, capacity allocated to HBM constrains supply for other DRAM formats, which helps support pricing across traditional segments.
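A toy model makes the wafer-allocation effect concrete. It is not based on Micron's actual capacity data: the wafer count and the 20% HBM allocation are hypothetical, conventional DRAM is normalized to one unit of bits per wafer, and the 3x and 4x ratios cited above are applied as a simple divisor on HBM bit output.

```python
def bit_supply(total_wafers: float, hbm_share: float, trade_ratio: float) -> dict:
    """Split a fixed wafer budget between HBM and conventional DRAM.

    Bits are in arbitrary units where one conventional DRAM wafer yields 1.0.
    An HBM wafer yields 1 / trade_ratio units, a simplified stand-in for the
    extra silicon HBM needs per bit (hypothetical model, not company data).
    """
    if not 0.0 <= hbm_share <= 1.0:
        raise ValueError("hbm_share must be between 0 and 1")
    if trade_ratio <= 0:
        raise ValueError("trade_ratio must be positive")
    hbm_wafers = total_wafers * hbm_share
    conventional_wafers = total_wafers - hbm_wafers
    hbm_bits = hbm_wafers / trade_ratio
    return {
        "hbm_bits": hbm_bits,
        "conventional_bits": conventional_wafers,
        "total_bits": hbm_bits + conventional_wafers,
    }


# Hypothetical fab with 100,000 wafer starts per month.
scenarios = {
    "no HBM": bit_supply(100_000, hbm_share=0.0, trade_ratio=3.0),
    "20% HBM at 3x": bit_supply(100_000, hbm_share=0.2, trade_ratio=3.0),
    "20% HBM at 4x": bit_supply(100_000, hbm_share=0.2, trade_ratio=4.0),
}

for name, result in scenarios.items():
    print(f"{name:>14}: total bits = {result['total_bits']:,.0f}, "
          f"conventional bits = {result['conventional_bits']:,.0f}")
```

In this sketch, moving a fifth of wafer starts to HBM cuts total bit output by about 13% at a 3x ratio and 15% at 4x, while conventional DRAM bits fall by the full 20%. That is the mechanism by which HBM growth can tighten supply in traditional segments.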

US-Based Production

Micron is the only United States company in the HBM oligopoly and holds the largest advanced memory manufacturing footprint in the country. That is a meaningful advantage in today’s geopolitical environment.

The United States is the largest consumer of AI compute, and being domestically anchored gives Micron stronger access to strategic demand, policy support, and supply-chain prioritization.

Micron is also leading one of the largest semiconductor projects in United States history: a planned 100-billion-dollar investment to build four fabs in upstate New York. At the same time, the company is building a major research and manufacturing center in Boise, Idaho. Under the CHIPS and Science Act, Micron has been awarded up to 6.1 billion dollars to support its New York and Idaho projects.

This is not a short-term story. AI accelerator demand is expected to grow sharply over the next decade.

And the opportunity does not end in data centers. Smartphones and PCs, including laptops, will require more memory as on-device AI adoption expands.

Inference is moving to the edge, and that means higher memory demand across the entire device landscape.

The Market Is Still Mispricing Micron

Despite all of this, Micron trades at roughly the same level as in November 2020, two years before ChatGPT launched. Today it sits around 66 dollars, far below its 2024 high near 142 dollars. Many investors remain cautious because of memory cyclicality, macro concerns, and United States-China tensions.

But those concerns increasingly look overstated.

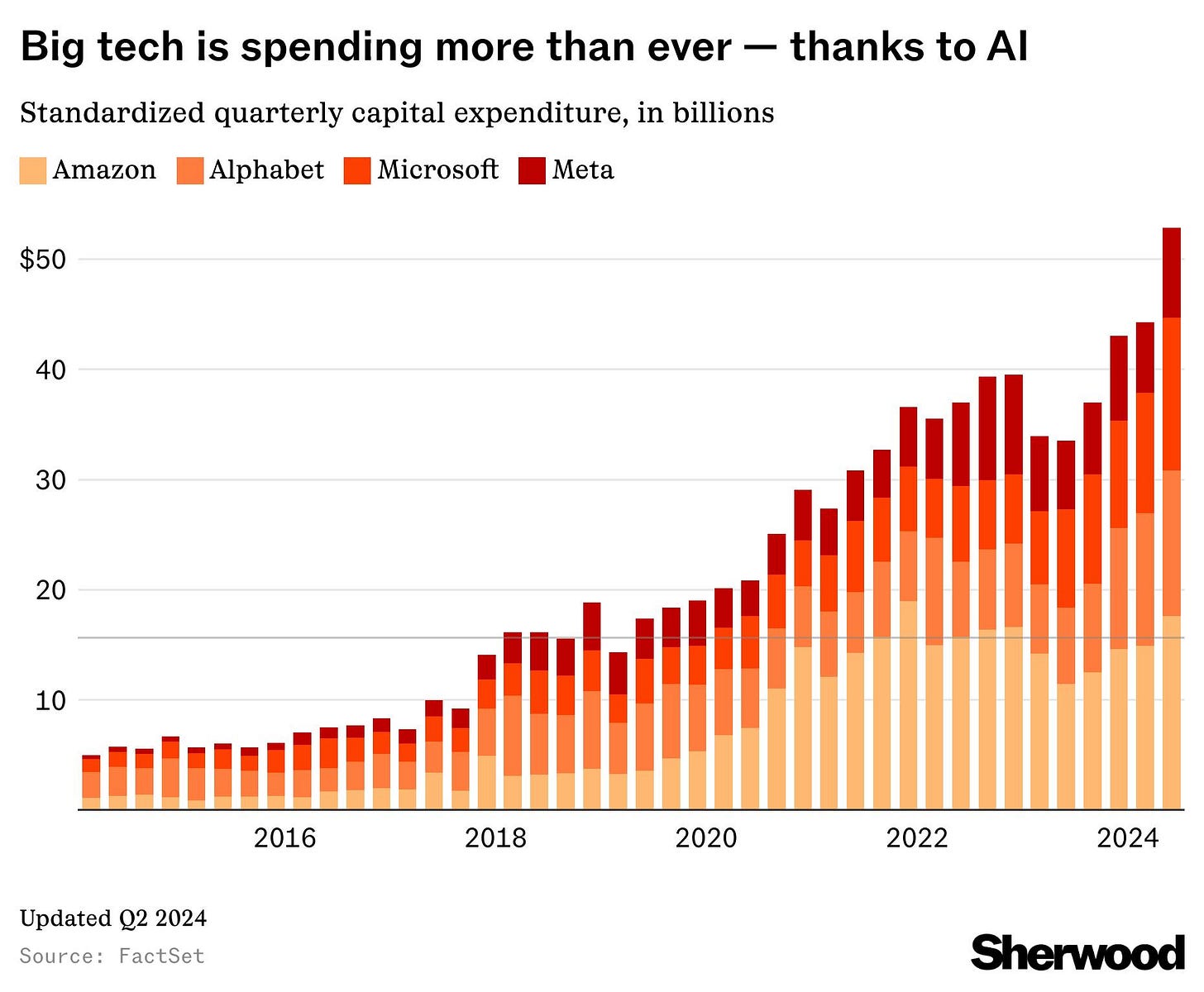

AI compute demand is not slowing. Executives at Microsoft and Amazon have said this clearly. OpenAI raised 40 billion dollars, bringing its valuation to 300 billion dollars. GPU cloud startups continue to emerge, and companies across the market are racing to secure compute capacity.

Some argue that new Chinese models or players like DeepSeek could flood the market and slow growth. That view ignores the Jevons paradox: when a resource becomes cheaper and more efficient to use, total consumption often rises rather than falls, because lower costs unlock new uses. As AI becomes cheaper and more productive, demand for the infrastructure behind it can grow, and memory sits at the center of that infrastructure.
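The Jevons argument can be illustrated with a textbook constant-elasticity demand curve, Q = k · P^(-ε). This is a generic economics sketch, not an estimate for AI: the scale constant k, the prices, and the two elasticity values are hypothetical. When demand is elastic (ε > 1), a price cut raises total spending on the resource; when it is inelastic (ε < 1), spending falls.

```python
def quantity_demanded(price: float, elasticity: float, k: float = 1.0) -> float:
    """Constant-elasticity demand: Q = k * P ** (-elasticity)."""
    if price <= 0:
        raise ValueError("price must be positive")
    return k * price ** (-elasticity)


def total_spending(price: float, elasticity: float, k: float = 1.0) -> float:
    """Total spending on the resource: price times quantity demanded."""
    return price * quantity_demanded(price, elasticity, k)


# Hypothetical scenario: efficiency gains halve the effective price of compute.
old_price, new_price = 1.0, 0.5
for elasticity in (0.5, 1.5):
    before = total_spending(old_price, elasticity)
    after = total_spending(new_price, elasticity)
    change = after / before - 1
    print(f"elasticity={elasticity}: spending changes by {change:+.0%}")
```

With inelastic demand (0.5), halving the price cuts spending by about 29%; with elastic demand (1.5), spending rises by about 41%. Whether AI demand is elastic enough for the second case to hold is the empirical question; the sketch only shows why cheaper models do not automatically mean less infrastructure spending.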

Others focus on geopolitical shocks.

Could a trade war cause a downturn?

Yes, it is possible.

But the global economy already absorbed a pandemic that shut down entire countries. Employment has remained relatively resilient, inflation has eased from peak levels, and consumption and investment have held up. If the world adapted to COVID, it can adapt to tariffs.

Most likely, agreements will eventually be reached and the cycle will turn. Investors locked into worst-case narratives risk missing the bigger picture: AI is here to stay, and billions of users interacting with AI require massive compute, including memory.

So what is the real risk in buying Micron?

Let’s break it down.

Will AI keep improving and lifting productivity? Yes.

Will AI capital spending continue rising to meet demand? Yes.

Will billions of people eventually use AI daily? Most likely yes.

So what is the real risk in buying Micron?

Some point to competition, while others argue the stock was simply overvalued before and is now fairly priced. But when you look at the financial profile in detail, the opportunity looks clearer.

Financials

High Growth

Micron just reported the strongest earnings period in its history. Operating cash flow reached an all-time high of 3.94 billion dollars, equal to 49% of revenue. This is a business generating substantial cash.

Only 857 million dollars of that converted into free cash flow in the quarter, mainly because net capital expenditure was a very high 3.1 billion dollars. That investment intensity reflects an aggressive expansion of capacity and technology.
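The cash-flow figures fit together with simple arithmetic: free cash flow is operating cash flow minus net capital expenditure. The sketch below uses the quarterly numbers from the text in billions of dollars. The net capex input of 3.083 billion is backed out from the reported 857 million dollars of free cash flow (the text rounds it to 3.1 billion), and the revenue figure is implied by the 49% ratio rather than taken from a filing.

```python
def free_cash_flow(operating_cash_flow: float, net_capex: float) -> float:
    """Free cash flow = operating cash flow minus net capital expenditure."""
    return operating_cash_flow - net_capex


# Quarterly figures in billions of dollars.
ocf = 3.94          # operating cash flow, from the text
net_capex = 3.083   # backed out from 3.94 - 0.857; the text rounds it to 3.1
ocf_margin = 0.49   # OCF as a share of revenue, from the text

fcf = free_cash_flow(ocf, net_capex)
implied_revenue = ocf / ocf_margin  # approximate, because 49% is rounded

print(f"free cash flow:          {fcf:.3f}B")
print(f"implied revenue:         {implied_revenue:.2f}B")
print(f"FCF margin:              {fcf / implied_revenue:.1%}")
print(f"share of OCF reinvested: {net_capex / ocf:.0%}")
```

Roughly four of every five dollars of operating cash flow went back into capacity in the quarter, which is why free cash flow looks small next to record operating cash flow.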

This level of capital deployment, combined with Micron’s leadership in advanced memory and United States manufacturing expansion, supports significant long-term value creation.

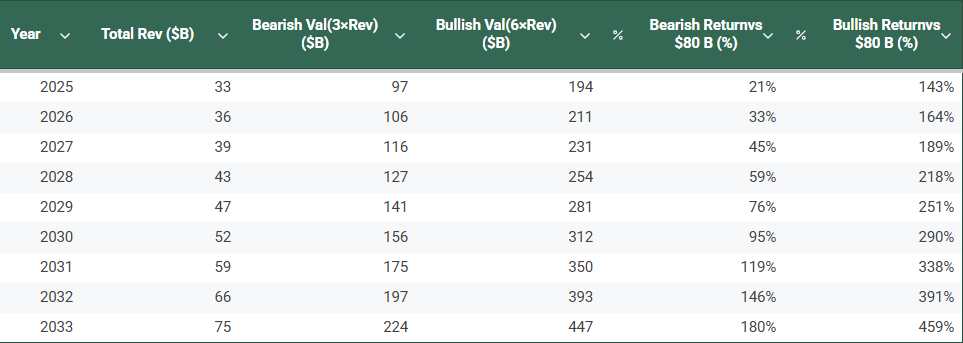

Bloomberg Intelligence projects the HBM market could reach around 130 billion dollars by 2033, with Micron at roughly 23% market share.

That alone would imply about 30 billion dollars in revenue, roughly equal to Micron’s current annual revenue, but concentrated in a significantly higher-margin segment.
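The revenue implied by the Bloomberg Intelligence projection is simply market size times share. A minimal check, with the roughly 30-billion-dollar current annual revenue taken from the comparison in the text:

```python
def implied_segment_revenue(market_size: float, market_share: float) -> float:
    """Revenue captured from a market: size times share (same units as size)."""
    if not 0.0 <= market_share <= 1.0:
        raise ValueError("market_share must be a fraction between 0 and 1")
    return market_size * market_share


hbm_market_2033 = 130.0        # billions of dollars, Bloomberg Intelligence projection
micron_share = 0.23            # projected Micron market share
current_annual_revenue = 30.0  # approximate, per the comparison in the text

hbm_revenue = implied_segment_revenue(hbm_market_2033, micron_share)
print(f"implied Micron HBM revenue in 2033: {hbm_revenue:.1f}B")
print(f"relative to current annual revenue: {hbm_revenue / current_annual_revenue:.2f}x")
```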

On top of that, several drivers could push Micron toward 75 billion dollars in revenue: pricing power from tight supply, margin expansion from scale and United States manufacturing, and growth in other memory categories such as conventional DRAM, NAND, and managed NAND, driven by data center expansion and rising memory demand from AI-enabled PCs and gaming.

Here is the logic behind that:

Valuation

At that revenue level, and using today’s price-to-sales multiple of 2.78, Micron would be valued at roughly 208.5 billion dollars. That is more than double its current market value and implies around a 12% compound annual return, which is above the long-term average return of the S&P 500. This does not include the added benefit of share repurchases and dividends.
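The valuation step multiplies projected revenue by a price-to-sales multiple and then converts the gain into a compound annual rate. The text states neither the horizon nor the current market value, so this sketch makes two labeled assumptions: an eight-year horizon, matching the 2033 date of the Bloomberg Intelligence projection, and a current value equal to the same 2.78 multiple applied to roughly 30 billion dollars of current annual revenue.

```python
def market_value(revenue: float, price_to_sales: float) -> float:
    """Market value implied by revenue and a price-to-sales multiple."""
    return revenue * price_to_sales


def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate between two values."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


ps_multiple = 2.78       # today's price-to-sales multiple, from the text
target_revenue = 75.0    # billions of dollars, the article's scenario
current_revenue = 30.0   # ASSUMPTION: approximate current annual revenue
horizon_years = 8        # ASSUMPTION: 2025 to 2033

current_value = market_value(current_revenue, ps_multiple)
target_value = market_value(target_revenue, ps_multiple)
annual_return = cagr(current_value, target_value, horizon_years)

print(f"current value: {current_value:.1f}B")
print(f"target value:  {target_value:.1f}B ({target_value / current_value:.1f}x)")
print(f"implied CAGR:  {annual_return:.1%}")
```

Because the same multiple is applied at both ends, the implied return in this sketch equals the revenue growth rate, about 12% a year under these assumptions; as the text notes, buybacks and dividends would come on top.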

This estimate uses the current, still-depressed multiple shaped by macro uncertainty. It also does not yet reflect potential margin expansion as HBM becomes a larger share of total revenue, or the manufacturing efficiencies that could come from United States capacity expansion.

For context, Micron traded at a 6.46 price-to-sales multiple at the beginning of last year. If margins improve and sentiment turns more constructive, upside could be materially higher. If AI adoption accelerates across sectors, through automation, autonomous systems, and broader deployment of AI-enabled products, memory demand, especially for HBM, could exceed current projections.
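To see how much the multiple alone matters, the same 75-billion-dollar revenue scenario can be priced at both multiples mentioned in the text. This holds revenue fixed and varies only the multiple; it is an illustration, not a forecast.

```python
def valuation_by_multiple(revenue: float, multiples: dict[str, float]) -> dict[str, float]:
    """Market value (same units as revenue) for each named price-to-sales multiple."""
    return {label: revenue * ps for label, ps in multiples.items()}


target_revenue = 75.0  # billions of dollars, the article's revenue scenario
multiples = {
    "today": 2.78,               # current price-to-sales multiple (from the text)
    "start of last year": 6.46,  # earlier multiple (from the text)
}

valuations = valuation_by_multiple(target_revenue, multiples)
for label, value in valuations.items():
    print(f"{label:<20} ({multiples[label]:.2f}x): {value:,.1f}B")
```

At the higher multiple, the same revenue would support a valuation about 2.3 times larger than at today's multiple, which is why a shift in sentiment can move the outcome as much as revenue growth does.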

This table shows the simplified valuation model:

Micron also maintains a healthy balance sheet, with 7.5 billion dollars in cash and equivalents, around 15 times its short-term debt, and 49 billion dollars in equity. The stock trades at just 1.67 times book value, with very low levels of goodwill and intangible assets. Its price-to-tangible-book ratio is only 1.80.
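The balance-sheet ratios imply a few figures the text does not state directly. This back-of-the-envelope sketch derives them from the article's numbers; because the inputs are rounded, the outputs are approximate.

```python
# Inputs from the text (billions of dollars unless noted).
cash = 7.5
cash_to_short_term_debt = 15.0   # "around 15 times its short-term debt"
equity = 49.0
price_to_book = 1.67
price_to_tangible_book = 1.80

# Derived, approximate figures.
short_term_debt = cash / cash_to_short_term_debt
market_value = price_to_book * equity
tangible_equity = market_value / price_to_tangible_book
tangible_share_of_book = tangible_equity / equity  # equals P/B divided by P/TBV

print(f"implied short-term debt: {short_term_debt:.2f}B")
print(f"implied market value:    {market_value:.1f}B")
print(f"implied tangible equity: {tangible_equity:.1f}B "
      f"({tangible_share_of_book:.0%} of book value)")
```

An implied market value of roughly 82 billion dollars is broadly consistent with applying the 2.78 price-to-sales multiple to about 30 billion dollars of annual revenue, and tangible equity at roughly 93% of book value is what "very low levels of goodwill and intangible assets" looks like in numbers.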

The company is also committed to returning capital to shareholders. Between fiscal year 2022 and the second quarter of fiscal 2025, Micron spent 3.2 billion dollars repurchasing 47 million shares and paid 1.7 billion dollars in dividends. In total, it returned 4.9 billion dollars to shareholders.
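A quick breakdown of the capital-return figures, including the average repurchase price they imply (a derived number, not one reported in the text):

```python
buybacks = 3.2             # billions of dollars spent on repurchases (from the text)
shares_repurchased = 47e6  # shares bought back (from the text)
dividends = 1.7            # billions of dollars paid in dividends (from the text)

total_returned = buybacks + dividends
avg_buyback_price = buybacks * 1e9 / shares_repurchased  # dollars per share (derived)
buyback_share = buybacks / total_returned

print(f"total returned:        {total_returned:.1f}B")
print(f"average buyback price: ${avg_buyback_price:.2f} per share")
print(f"share via buybacks:    {buyback_share:.0%}")
```

An average price of about 68 dollars per share is close to the roughly 66-dollar share price cited earlier, so past repurchases were made near today's level.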

Risks

As with any company, Micron faces risks, and no analysis is complete without addressing them. Here are the key ones:

Tariffs

While Micron has United States manufacturing and is investing heavily there, much of its production capacity is located in Taiwan, Singapore, and Japan. A trade war that disrupts exports could materially affect the business. Tariffs remain a significant risk across the industry, and Micron is not exempt.

Macro uncertainty

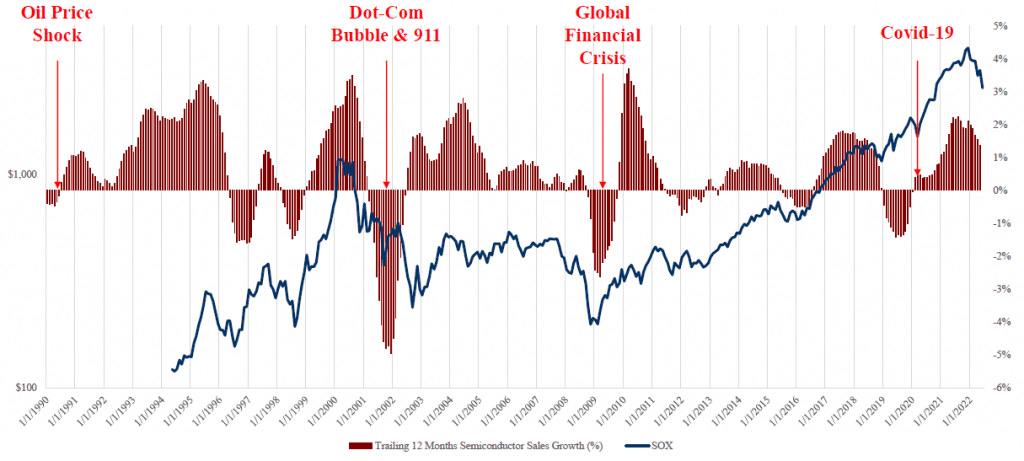

Ongoing concerns such as tariffs, weaker consumer demand, and the potential return of inflation driven by trade tensions create an uncertain macro environment. These factors could trigger a recession. In that scenario, consumer demand could soften, companies might delay capital expenditure plans, and discretionary spending would likely decline. Even a mild recession would likely pressure Micron’s stock, along with the broader market.

Cyclicality

The semiconductor industry is structurally cyclical, and historical data clearly reflects that pattern.

These cycles are driven by waves of capex investment and the sensitivity of demand to macroeconomic trends, particularly discretionary spending. This cyclicality is difficult to predict with precision. Current forecasts suggest that we are not at the peak of the cycle and that the growth phase may continue for at least another year, backed by large-scale capex plans from major corporations. Still, this remains uncertain, and it's a factor investors should always consider when evaluating semiconductor stocks.

Conclusion

Memory will remain a major AI bottleneck for a long time, and Micron is investing heavily in both R&D and manufacturing capacity to stay ahead of this demand surge. With the largest advanced memory manufacturing footprint in the United States, Micron is uniquely positioned to lead at scale in high-performance memory.

When you invest in Micron, you are not only backing a company accelerating innovation at the frontier of memory technology. You are also backing a United States-based manufacturer with scale, strategic relevance, and geopolitical advantages that can support a premium position in the global semiconductor market.

In summary, Micron is a strong candidate to outperform the broader market over the long term. This is not just exposure to a technology trend; it is exposure to a United States company producing advanced memory essential to unlocking AI’s full potential.
