Micron: A No-Brainer AI Bet

AI-fueled growth, US manufacturing, dividends, and share repurchases make Micron a low-risk, high-return AI opportunity

When people discuss AI, they usually think first of large language models like ChatGPT or Claude, or of chip designers like NVIDIA and AMD.

Some also recognize the opportunity in foundries like TSMC. What many still overlook is the critical role of memory.

The rise of AI has pushed computational demand to unprecedented levels, and growth remains exponential. Today, foundry capacity and raw materials are no longer the only major bottlenecks in scaling AI accelerator output. One of the largest is HBM.

HBM, or High Bandwidth Memory, is ultra-fast stacked DRAM packaged alongside the processor in every leading AI accelerator. Without it, even the most advanced GPUs face performance constraints. Demand for this memory has surged, lifting growth across memory producers and leaving supply tight. The result is a structural shortage that is unlikely to resolve anytime soon.

A Memory Powerhouse

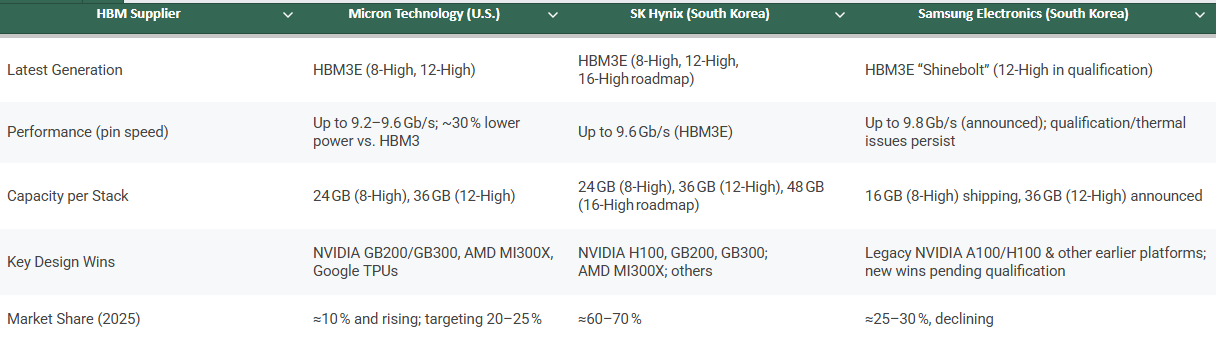

The HBM market is an oligopoly of three major players: Samsung, SK hynix, and Micron. Samsung, long the clear memory leader, has seen its HBM position weaken as execution and R&D momentum shifted toward its peers.

While SK hynix has moved very quickly in HBM development, Micron is a close second and in some areas already ahead.

Its HBM3E is more power efficient, drawing around 20% less power than competing alternatives, and its 12-high design delivers higher capacity per stack: roughly 50% more memory than 8-high stacks. That is a major advantage in a world where capacity and thermal efficiency are becoming as critical as raw compute.

NVIDIA selected Micron as a key supplier for GB200 Grace Blackwell systems and for upcoming GB300 platforms, positioning Micron as a foundational vendor for next-generation AI infrastructure. That level of design-in reflects strong confidence in product quality and execution.

Micron did not stop with NVIDIA. It is also a key supplier to AMD, as well as to Google and other major hyperscalers. The company has stated that its HBM output for calendar 2025 is fully sold out.

Here is a quick comparison of the three leading memory producers:

That level of demand has triggered a major investment cycle. Micron is responding by increasing capital expenditure to expand capacity and meet what management describes as a multi-year demand inflection.

This is not speculative growth. It is demand that already exists and is outpacing supply.

The Shortage Is Structural

This is where most people underestimate what is happening.

HBM is not just another memory product. It is extraordinarily difficult and expensive to produce. For the same number of bits, HBM can consume up to 3x as much wafer capacity as DDR5, and future generations such as HBM4 could push that ratio to around 4x.

That means every additional HBM package that rolls off a production line pulls wafer capacity away from traditional DRAM. The more HBM the industry builds, the tighter supply gets across the entire memory stack.
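To make the wafer trade-off concrete, here is a minimal Python sketch. It assumes a hypothetical fab with 100 wafers of capacity, normalizes DDR5 output to 1 bit-unit per wafer, and treats the 3x and roughly 4x ratios above as fixed trade ratios. The function name `bit_output` and every input are illustrative assumptions, not Micron or industry data.

```python
def bit_output(total_wafers: float, hbm_share: float, trade_ratio: float,
               bits_per_wafer: float = 1.0) -> tuple[float, float]:
    """Return (conventional_dram_bits, hbm_bits) for a hypothetical wafer split.

    Illustrative assumption: HBM needs `trade_ratio` times as much wafer
    capacity per bit as standard DRAM, and `bits_per_wafer` is the
    normalized standard-DRAM output of one wafer.
    """
    if not 0.0 <= hbm_share <= 1.0:
        raise ValueError("hbm_share must be between 0 and 1")
    if trade_ratio < 1.0:
        raise ValueError("trade_ratio must be at least 1")
    # Wafers left for conventional DRAM produce bits at the full rate.
    conventional = total_wafers * (1.0 - hbm_share) * bits_per_wafer
    # Wafers moved to HBM yield only 1/trade_ratio as many bits.
    hbm = total_wafers * hbm_share * bits_per_wafer / trade_ratio
    return conventional, hbm


# Hypothetical scenario: 20% of 100 wafers shifted to HBM.
for ratio in (3.0, 4.0):
    conv, hbm = bit_output(100.0, 0.20, ratio)
    print(f"ratio {ratio:.0f}x: conventional={conv:.1f}, hbm={hbm:.1f}, total={conv + hbm:.1f}")
# prints:
# ratio 3x: conventional=80.0, hbm=6.7, total=86.7
# ratio 4x: conventional=80.0, hbm=5.0, total=85.0
```

Under these assumptions, moving a fifth of capacity to HBM cuts conventional DRAM output by 20% while adding back only a fraction of those bits, so total bit supply falls from 100 units to about 86.7 at 3x and 85.0 at 4x.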

This is not a temporary imbalance. It is a structural constraint baked into the physics and economics of memory manufacturing.

There are only three companies on Earth capable of producing HBM at scale. Building a new fab takes years and billions of dollars. Even with aggressive expansion plans, the gap between supply and demand is widening, not closing.

NVIDIA’s next-generation platforms require more HBM per chip. AMD is scaling its MI series with larger memory footprints. Google, Microsoft, Amazon, and Meta are all expanding their custom silicon programs, each of which demands HBM. Every new AI model is larger, every inference cluster is denser, and every deployment cycle consumes more memory than the last.

The result is a supply environment that strongly favors producers.

Memory Prices Are Heading Higher

When supply is constrained and demand is accelerating, prices rise. That is exactly what is playing out across the memory market.

HBM pricing has already moved sharply higher, and with supply expected to remain tight through 2025 and 2026, it is likely to keep climbing. But the pricing pressure will not stop at HBM.

Because HBM production absorbs disproportionate wafer capacity, it constrains the supply of conventional DRAM as well. Server DRAM, mobile DRAM, and PC DRAM all compete for the same manufacturing base. As more capacity shifts toward high-value HBM, supply for these other segments tightens and pricing firms across the board.

DDR5 adoption is accelerating as AI workloads increase memory intensity. NAND remains essential for data-heavy AI use cases, from training data storage to model-serving workloads. GDDR is seeing broader use in high-performance graphics systems repurposed for AI tasks. Demand is rising on every front, and the supply side cannot keep up.

This dynamic is self-reinforcing. Higher HBM demand leads to tighter DRAM supply, which supports higher prices across all memory categories, which improves margins for producers, which funds more HBM expansion, which consumes more wafer capacity, which tightens supply further.

It is a cycle that favors producers for as long as AI infrastructure buildout continues. And that buildout shows no sign of slowing.

Beyond HBM


Subscribe to Daniel Romero to keep reading this post and get 7 days of free access to the full post archives.