Can AMD beat NVIDIA?

NVIDIA has maintained a dominant position for years, but AMD is taking the steps needed to challenge that leadership

NVIDIA has dominated the AI accelerator market for years. With around 90% share in AI data center GPUs, a massive software advantage through CUDA, and powerful rack-scale systems like NVLink and NVSwitch, it has built one of the strongest competitive positions in the industry and grown into the largest company in the world.

But 90% market share never lasts forever. Just ask Intel.

That dominance is now starting to show some cracks. Supply has been constrained for years, pricing remains extremely high, and when a company pushes its customers too hard for too long, those customers eventually start looking for alternatives.

That is where AMD entered the picture.

AMD is no longer satisfied with being a distant second. It wants to become a real alternative at scale, and for the first time in years, it is building a credible case.

From laggard to contender

AMD was painfully late to the AI accelerator race. While NVIDIA was already shipping products like the H100 and H200, AMD had nothing truly competitive in the market. Even when the MI300X arrived, hardware was not the main issue. Software was. ROCm was still immature, not fully open, and the broader AI stack was not ready for prime time.

The result was obvious: AMD remained a very distant second.

That is now changing, and changing fast. AMD is no longer just trying to correct past mistakes. It is building the foundation of a much broader AI platform, one that could eventually weaken part of NVIDIA’s moat.

The company has now open-sourced ROCm, acquired Silo AI and Nod.ai, and is using those assets to accelerate software development. Nod.ai, in particular, is now fully focused on improving ROCm and helping AMD close one of the most important gaps in the stack. At the same time, AMD has been expanding its AI software organization aggressively.

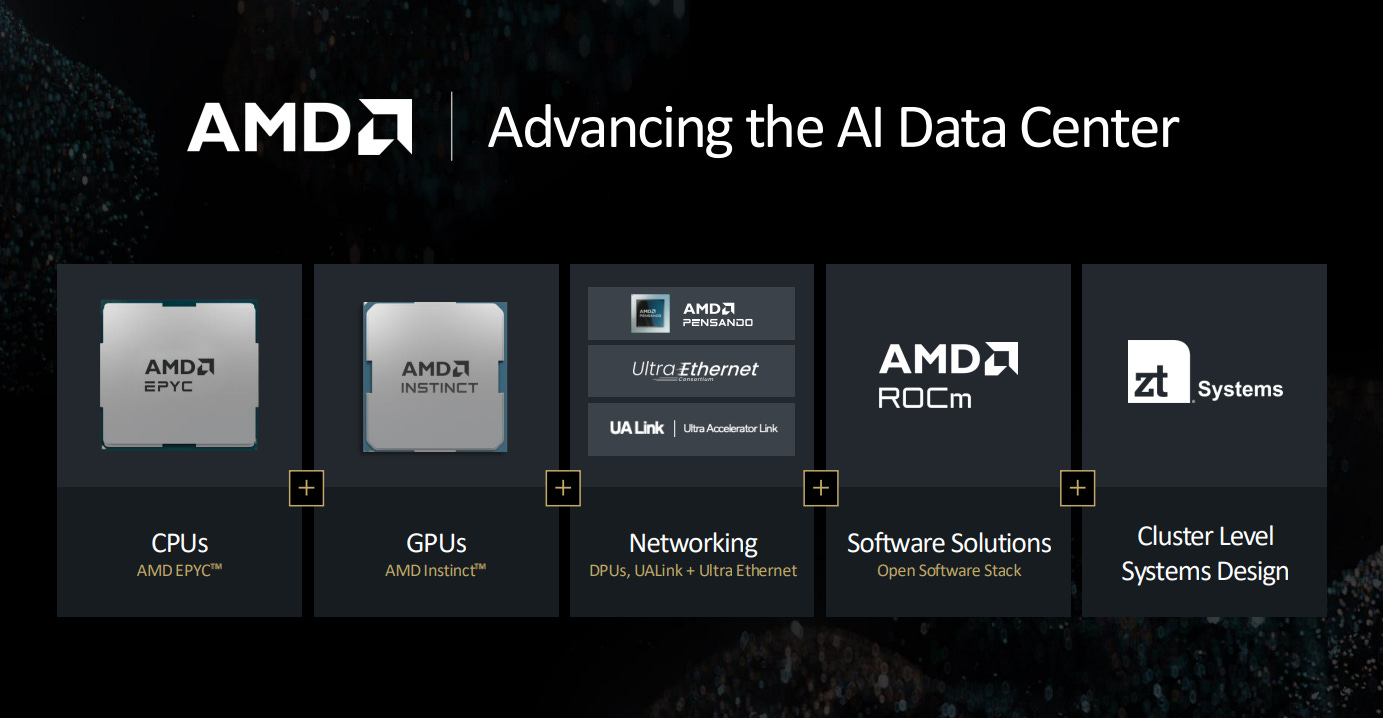

On the hardware side, AMD is also moving with far more urgency. The MI355X and MI400 series are designed to materially improve its competitive position, while acquisitions such as Pensando, Xilinx, and ZT Systems strengthen AMD across networking, adaptive compute, and rack-scale infrastructure.

This is what makes the story more interesting now. AMD is no longer approaching AI as just a chip company. It is trying to become a full-platform competitor.

Beating NVIDIA in inference

Inference is likely to become the largest source of AI compute demand. That view is increasingly shared across the industry, and some estimates suggest inference could eventually be many times larger than the training market. If that is directionally correct, the opportunity in front of AMD is enormous.

The logic is simple. Running large language models, personalized assistants, image generation, video generation, enterprise copilots, AI agents, and robotics will require an immense amount of inference compute. If AI becomes embedded across software, devices, and physical systems, the demand for efficient inference hardware will be massive.

That is one of the reasons AMD is putting so much emphasis on this part of the market.

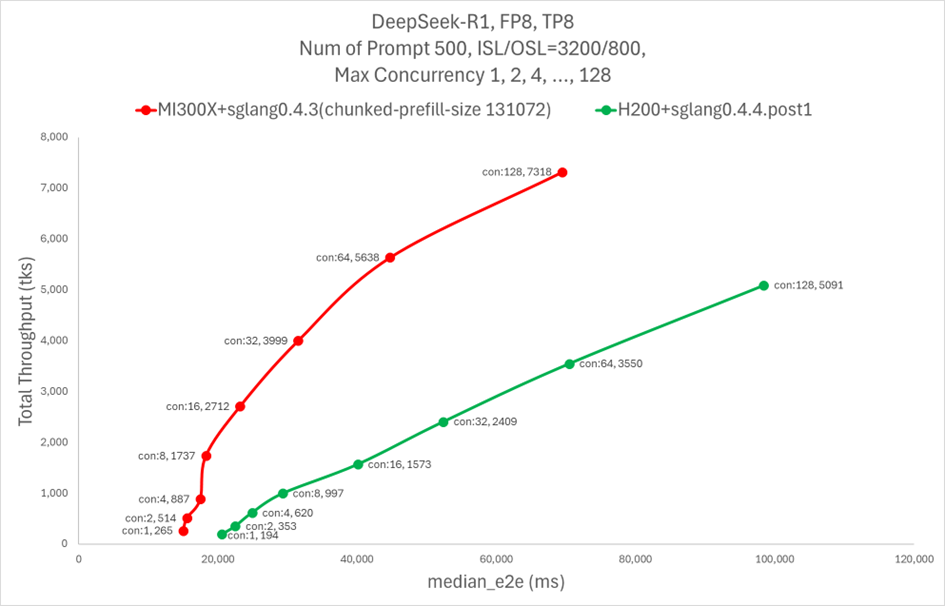

A good example is DeepSeek. AMD has shown that the MI300X can deliver more than double the DeepSeek throughput of NVIDIA’s H200, improving from 6,484.76 to 13,704.36 tokens per second after introducing AITER, its optimized AI kernel library. That kind of gain is notable because it shows how much room there still is for software improvements when the stack is properly optimized.

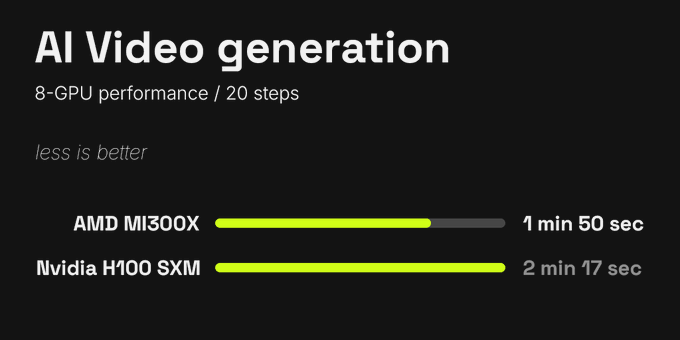

Another example comes from Higgsfield AI, one of the leading startups in video generation. The company reported a meaningful performance advantage with AMD chips over NVIDIA’s, with roughly 25% faster performance in its workload.

And when both performance and cost are taken into account, Higgsfield found that AMD GPUs are about 40% more cost-effective for video inference than NVIDIA’s.

CEO Alex Mashrabov even put it bluntly:

Jensen Huang said that, in the future, all pixels will be generated rather than rendered. What he may not have expected is that a meaningful share of that generation could happen on AMD GPUs, not NVIDIA’s.

These are just a few examples from Higgsfield’s testing, but they point in the same direction: AMD’s performance advantage in this workload is becoming increasingly clear.

AMD’s hardware is becoming far more competitive, and its software stack is improving at a pace that would have seemed unlikely not long ago.

And this still feels like the early stage of the story.

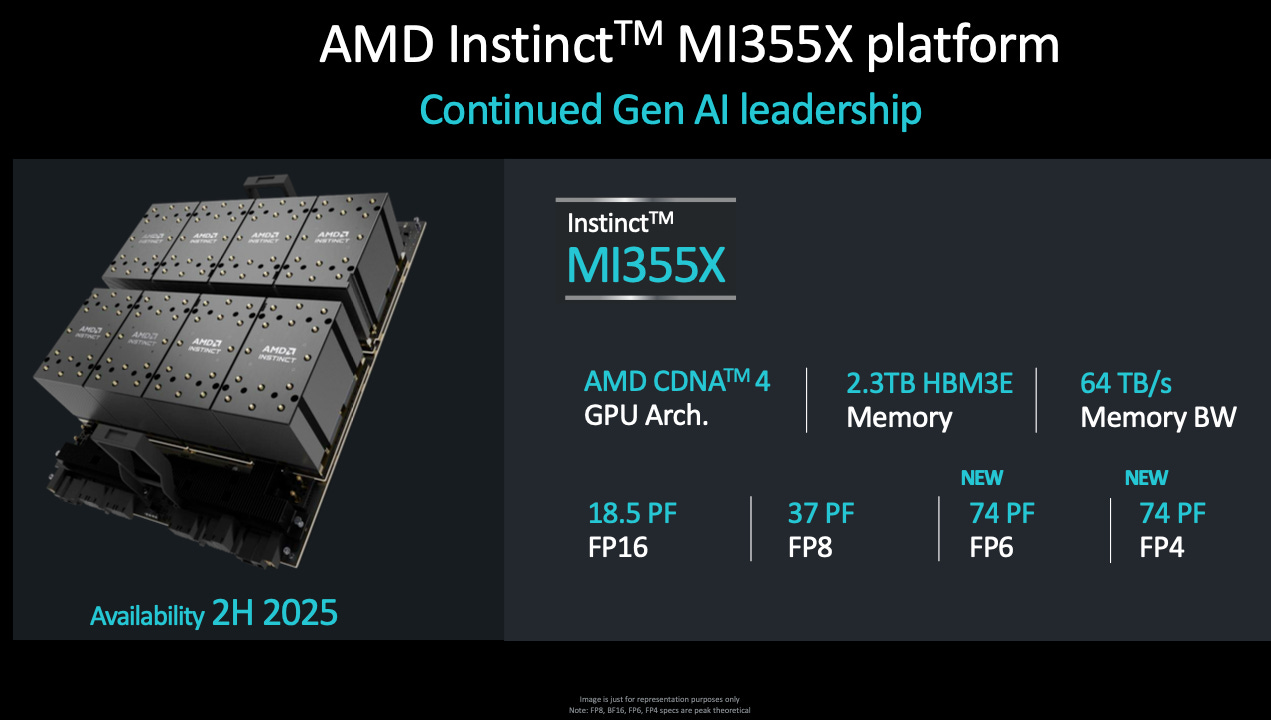

MI355X and MI400: Next-generation firepower

The strong performance of the MI300X has already translated into meaningful demand, and that momentum appears to be carrying into the upcoming MI325X. The MI300X is already being used in major deployments, including Microsoft Copilot and Meta’s Llama workloads, and it also powers El Capitan, currently the most powerful supercomputer in the world. AMD has also said that its new MI325X accelerators are being used to support ChatGPT-based Microsoft Copilot.

AMD’s accelerator footprint is also expanding across the cloud. Its GPUs are now available through major platforms such as Oracle, IBM Cloud, Microsoft Azure, and DigitalOcean. At the same time, demand is growing among independent AI cloud providers like TensorWave, Vultr, Hot Aisle, and RunPod.

That matters because it shows AMD is no longer limited to a few isolated wins. Its presence is spreading across both large cloud providers and the broader AI infrastructure ecosystem.

As successful as AMD’s earlier accelerators have been, with the Instinct lineup becoming the fastest-growing product family in the company’s history, the most important products are still ahead. Later this year, AMD is expected to launch the MI355X, a chip it says could deliver up to 35 times the inference performance of the previous generation. That puts it directly against NVIDIA’s Blackwell and Blackwell Ultra.

Oracle has already committed billions to a 30,000-GPU cluster based on the MI355X, making it one of the clearest and most important validations AMD has received so far in the AI infrastructure race.

Looking further ahead, AMD plans to launch the Instinct MI400 APU in 2026, built on a new architecture and designed to incorporate technology and capabilities strengthened by the Xilinx acquisition. If execution is strong, this could give AMD a meaningful advantage in more programmable AI workloads across edge, embedded, and data center environments.

UALink: Challenging the NVLink moat

One of NVIDIA’s most underappreciated advantages is NVLink, its custom interconnect technology that allows GPUs to communicate at very high speed with low latency across large clusters. It is a core part of the architecture behind NVIDIA’s rack-scale AI systems and one of the reasons the company has been able to sell integrated solutions at such high prices.

That advantage is now facing a more serious challenge.

Enter UALink, a new open standard backed by a powerful consortium that includes AMD, Google, Microsoft, Meta, Apple, Broadcom, Amazon, and Intel, among others. Built on contributions tied to AMD’s Infinity Fabric and Broadcom’s networking expertise, UALink is designed to offer many of the same benefits as NVLink while pushing the industry toward a more open ecosystem.

According to the published UALink 1.0 specification, the standard supports:

Up to 1,024 accelerators within a single fabric

Up to 800 Gb/sec per port, configurable in x1, x2, or x4 links

Sub-microsecond round-trip latency at rack scale

Massive power and cost advantages relative to Ethernet and PCIe in certain AI cluster configurations

If UALink delivers on its promise, it could become one of the first real attempts to weaken NVLink’s grip on large-scale AI infrastructure. At a minimum, it gives hyperscalers and system builders a credible open alternative. In a more aggressive scenario, it could also increase pressure on NVIDIA to support broader industry standards rather than keeping customers inside a tightly controlled ecosystem.

That would be a meaningful shift in AI data center architecture, and AMD is right at the center of it.

Busting the CUDA moat

NVIDIA did not win this market on hardware alone. A huge part of its dominance came from building a powerful software ecosystem around CUDA, cuDNN, and deep integrations across the AI stack.

That is still one of NVIDIA’s biggest advantages.

But AMD is making real progress here as well.

ROCm is now open source, which gives AMD a much better position to work with the broader developer community and accelerate adoption across open AI frameworks.

The company is heavily focused on inference optimization, and that progress is already starting to show. The performance gains seen with DeepSeek through AITER are one early example, and AMD is clearly pushing to improve inference performance across a much wider range of models and workloads.

AI kernel performance has improved rapidly, with AMD showing major gains in only a matter of months.

Nod.ai is now fully dedicated to strengthening and optimizing AMD’s software stack.

AMD is also investing aggressively in software talent. Chris Sosa, Director of Engineering for AI Software at AMD, has outlined an ambitious plan to keep scaling the team at a very fast pace.

If AMD keeps improving ROCm’s usability, fixing bugs, expanding compatibility with machine learning libraries, and extracting more performance from its hardware, it can become far more competitive in inference at scale. And if that happens, one of NVIDIA’s most valuable advantages, its software-driven pricing power, starts to weaken.

AMD needs to scale

So far, one of AMD’s biggest weaknesses has been the lack of a complete rack-scale solution. It did not have a true NVSwitch equivalent, no UFM, no NVLink, and no fully integrated system architecture that could compete head-on with NVIDIA’s end-to-end offering.

That gap has mattered.

But it is now starting to close.

AMD’s next phase is built around a more complete infrastructure stack:

ZT Systems is expected to deliver full-rack, UALink-based server designs

Pensando DPUs and NICs are being integrated into AMD’s rack-scale systems

The upcoming MI355 and MI400 accelerators are being designed to scale more efficiently across larger system deployments

If AMD executes well, these systems could become a much stronger alternative to NVIDIA’s DGX lineup, especially when combined with AMD’s strengths in chiplet design and platform flexibility.

The broader point is simple. AMD took AI seriously too late, and that delay allowed NVIDIA to build a massive lead. For a long time, AMD lacked a credible software stack, competitive accelerators, a high-performance interconnect, and a scalable server and rack-level architecture.

That is why the story looked so one-sided.

But this year, the setup looks very different. AMD is launching a new generation of accelerators beginning with the MI355X, built on a more competitive architecture. ROCm has improved significantly, inference performance is becoming much more compelling, and ZT Systems is helping AMD move toward a more complete server and rack-scale offering supported by Pensando networking and UALink.

AMD still has a lot to prove, but for the first time, it is starting to build the kind of full-stack AI platform that can compete more seriously at scale.

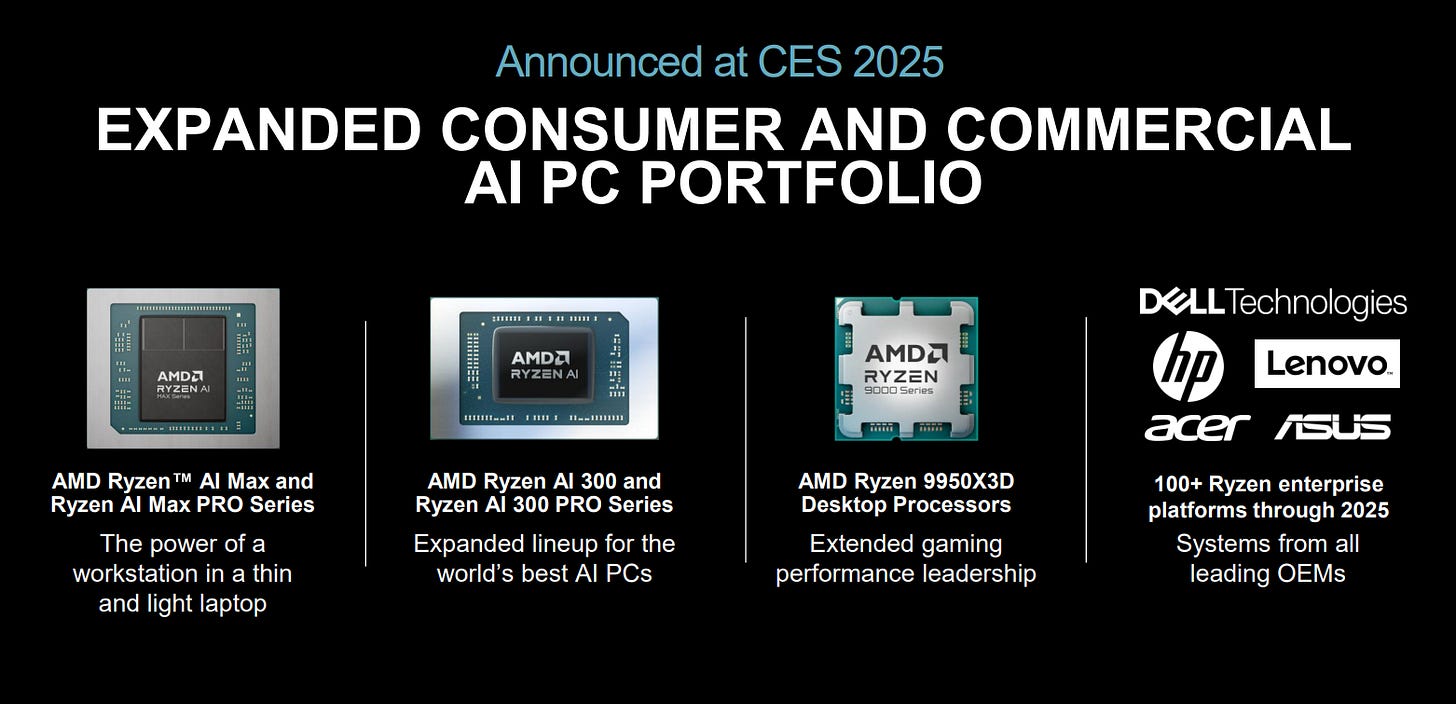

Beyond the data center: AI everywhere

AMD is not limiting its AI ambitions to cloud GPUs. It is also pushing AI capabilities across a much broader range of devices and form factors.

Ryzen AI APUs are now available in laptops and desktops, combining CPU, GPU, and NPU in a single SoC, which makes them well suited for local AI workloads and on-device LLM inference

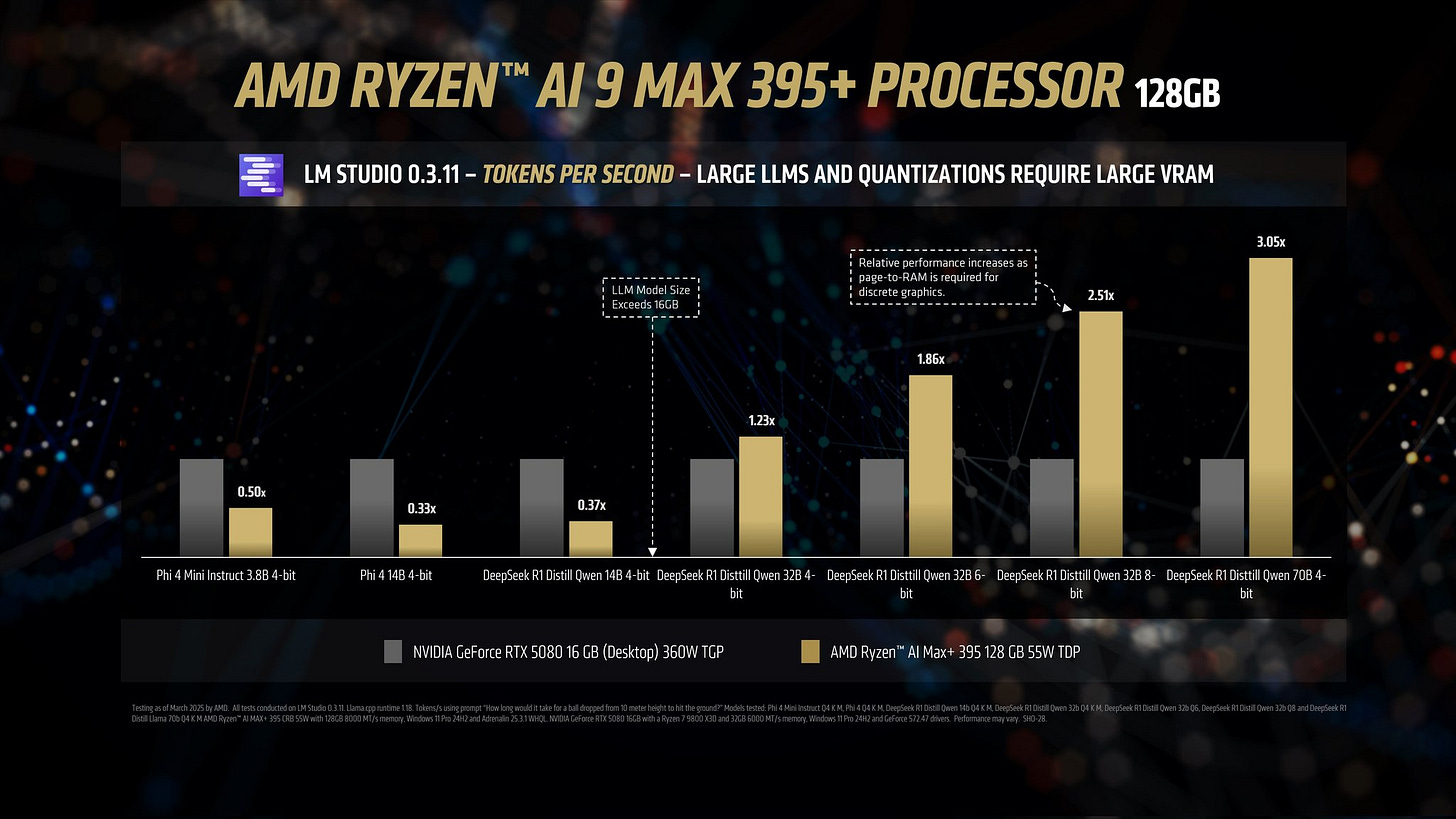

These new APUs have delivered impressive results across general-purpose computing, gaming, and AI inference. Strix Halo, the most powerful model in the new Ryzen AI lineup, has even benchmarked at more than 3x the performance of the RTX 5080 in DeepSeek R1 AI tests:

Strix Halo was chosen by Framework to power its new desktop system, a product designed to compete directly with NVIDIA’s Project DIGITS. At roughly $2,000, it offers very strong specifications at a meaningfully lower price point than DIGITS, which is expected to launch above $3,000.

It is one of the most impressive products AMD has released in years, and it strengthens the case that the company can build highly competitive AI systems not only for the client market, but eventually at the data center level as well.

Xilinx FPGAs are also being adapted for AI-focused edge workloads such as drones, surveillance, 3D printing, and traffic systems

The upcoming MI400 APU is expected to bring more of that FPGA-driven flexibility into next-generation AI accelerators, which could give AMD an advantage in workloads that require more tailored and adaptable system designs

And this part of the story is still underappreciated. With the Xilinx acquisition, AMD now controls the largest FPGA portfolio in the semiconductor industry. That gives the company a level of optionality in adaptive and specialized compute that Wall Street still does not seem to fully value.

Xilinx: The underappreciated giant

Xilinx is the global leader in FPGAs, and its acquisition by AMD was the largest in semiconductor history. What makes FPGAs so valuable is their flexibility. Unlike fixed-function chips, they can be reprogrammed for different workloads, which makes them useful across a wide range of applications.

That versatility matters because many of the markets where FPGAs are most relevant are also markets with strong long-term growth potential, including aerospace, telecommunications, robotics, surveillance, drug discovery, and data centers. One example is Starlink, which began using AMD Versal FPGAs in its newer generation of satellites.

AMD is also starting to pull more of that technology into the rest of its portfolio. Its newer Versal and Ryzen AI products include NPUs built on the XDNA architecture, helping improve both compute efficiency and power efficiency for AI workloads.

Looking further ahead, Xilinx could also become more important in the data center. The upcoming MI400 series is expected to incorporate more advanced Xilinx-derived capabilities, and that opens the door to more adaptive and specialized system designs over time.

As AMD continues to integrate Xilinx more deeply across its product stack, it is not just improving its architecture. It is also expanding its reach into more end markets and building a competitive position that extends beyond traditional CPUs and GPUs.

AMD is on the right path

AMD is playing the long game, and it has a strong foundation to build on.

The company already offers some of the best CPUs in the market across multiple tiers, including the data center, where EPYC has gained major traction and become a serious force among hyperscalers. AMD also has deeper experience with chiplet architectures, which gives it an important advantage when designing increasingly complex SoCs for AI and high-performance compute. Compared with NVIDIA’s more traditional design approach, AMD has meaningful architectural strengths in this area.

Now the company is trying to build on that base to become a serious leader in AI as well.

AMD may have entered the accelerator race late, but the pace of execution is now far more aggressive. The company is preparing to launch multiple new chips based on new architectures within a relatively short period of time, something that is highly unusual in the semiconductor industry.

At the same time, AMD has expanded strategically through acquisitions that strengthen its position across the full AI stack:

Xilinx adds deep FPGA expertise, strengthens AMD’s broader product portfolio, and expands its reach into attractive markets such as space, defense, and robotics

Pensando improves AMD’s system-level capabilities through advanced DPUs and NICs, which are increasingly important for efficient networking and data movement inside AI infrastructure

ZT Systems gives AMD the ability to bring together its chips, networking hardware, software, and server design into more tightly integrated systems

Together, these moves give AMD a much more credible path toward becoming a full-platform AI company rather than just a component supplier.

Conclusion: Keep an eye on AMD

A lot is beginning to come together, and this year could mark an important turning point.

The MI355X will be one of the first major products to reflect AMD’s broader strategy, combining stronger accelerator performance with tighter system integration and a more complete server-level approach. If execution is strong, it could become an important proof point for the company’s AI ambitions.

Then comes MI400 in 2026, which could push AMD another step forward if the architecture delivers as expected.

NVIDIA’s market cap is currently 18 times higher than AMD’s, a gap that will become much harder to justify if AMD continues to execute successfully on its roadmap.

NVIDIA may still lead today, but AMD is no longer standing still.

Insiders have started buying, signaling optimism, and shareholders should feel the same. This is a multi-trillion-dollar market, and it will create multiple multi-trillion-dollar companies.

There is a good chance that AMD will be one of them.

I remember reading your deep dive on AMD a while back which was crystal clear and so visionary. Congrats Daniel, I tip my hat to you!

Nvidia won’t be beaten but AMD looks like a great catch up trade